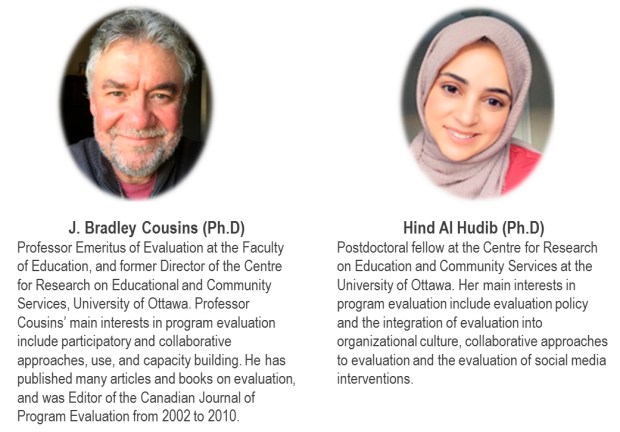

By J. Bradley Cousins and Hind Al Hudib

University of Ottawa, Canada

EvalParticipativa – what an amazing space! We are longtime fans, researchers, and purveyors of participatory evaluation, but in our experience EvalParticipativa is unparalleled as a space for professional exchange, capacity building, and learning in this domain. It is our very great honour to contribute to, and become part of, the EvalParticipativa community.

Participatory evaluation (PE) has been near and dear to our hearts for a very long time. One of us (Cousins) has been writing about this topic for almost three decades. While our contributions have been mostly research on PE, we’ve always had an interest in translating research-based knowledge into practice. What an amazing opportunity EvalParticipativa provides in this regard! But perhaps even more compelling is the reverse: what a fabulous opportunity to turn expert practice into research! Lessons learned will surely advance evaluation theory and practice.

The foundational definition of PE, as has been made abundantly clear on this website, implicates trained evaluators working in partnership with program community members to produce evaluative knowledge. Program stakeholders are not merely consulted for their views and opinions but they actually engage as members of the evaluation team. What we would like to do in this short article is to situate PE more broadly within the evaluation landscape and consider the implications of doing so for practice.

Over time, we have come to understand PE to be a member of the family of evaluation types that we call collaborative approaches to evaluation (CAE). All members of this family – e.g., practical PE, transformative PE, empowerment evaluation, participatory action research, democratic evaluation, most significant change technique, utilization-focused evaluation – share the same foundational definition mentioned above. This basic definitional feature distinguishes CAE from more traditional, mainstream, evaluator-directed approaches to evaluation.

Why do it? From our perspective, the predominant goals or justifications for CAE are pragmatic (solve program problems, improve programs), political (ameliorate social injustice), philosophical (develop deep, context-relevant understanding), and ethical (assure fairness). Any justification for CAE will draw from any or all of these categories, but generally there will be an emphasis on one or two. For example, practical PE is very much geared to enhancing the use of evaluation to leverage program change and to augment learning about the program and its context. It will draw mostly from the pragmatic justification, but the other categories are implicated as well. On the other hand, transformative PE, which is mostly about social inclusion and empowering marginalized groups, relies heavily on the political justification but also depends to some extent on the other categories.

What does it look like in practice? We know that CAE can take many different forms, but these forms can be described by three fundamental dimensions:

- control of technical evaluation decision making (Is it the evaluator who decides? Stakeholders? A mix?);

- stakeholder diversity (Is it a narrow group of stakeholders who participate? A wider range of program community members? Somewhere in the middle?); and

- depth of participation (Is it engaged consultation? Or do stakeholders participate in most or all methodological aspects of the evaluation?).

As an example, practical PE (i) is often co-directed by the evaluator(s) and participating stakeholders, and (ii) usually engages only a small group of stakeholders – those who have the power to make actual changes to the program (e.g., program developers, managers). Generally, (iii) stakeholders are deeply involved in all or most aspects of the evaluation methodology in practical PE.

On the other hand, while control may remain balanced, transformative PE is more likely to involve a wider range of stakeholders, including intended program beneficiaries, and may or may not implicate them in all aspects of the evaluation.

Principles to Guide Practice: Our premise is that the goals and specific processes of CAE ought to arise from an analysis of the program’s contextual factors and influences, be they social, historic, economic, or political. CAE is about approaches to evaluation, as opposed to models or prototypes that are intended to be force-fitted into particular evaluation requests or challenges. Any member of the CAE family may be selected for application in any given program evaluation circumstance; or, some alternative approach that adheres to CAE’s basic definition could be developed in context.

To help guide CAE decision making and practical application, we systematically developed and validated a set of overarching principles that cover all family members. These principles are most usefully applied as a set (as opposed to a pick-and-choose menu); they are interconnected and only loosely temporal in sequence. The principles and suggested practical questions associated with each appear below.

This set of evidence-based principles is well suited to planning, developing, and implementing CAE, but it could also be used in other ways, such as designing training for evaluators and program community members, retrospectively reviewing completed evaluations, and informing organizational evaluation policy development. The set could also provide a valuable conceptual frame for ongoing research and inquiry in evaluation.

To what extent do the principles resonate with the goals, objectives, activities, and products of EvalParticipativa? Can you imagine how these principles may have played out in some of the fabulous projects and learning experiences that have been posted on the website? Can you envision using the principles to guide your own PE or other CAE-related projects? We hope that you will find them beneficial and that, through application and use, they will prove to be of considerable value to this amazing community. Bravo EvalParticipativa!

References

- Cousins, J. B., & Chouinard, J. A. (2012). Participatory evaluation up close: A review and integration of the research base. Charlotte, NC: Information Age Press.

- Cousins, J. B., Whitmore, E., & Shulha, L. M. (2013). Arguments for a common set of principles for collaborative inquiry in evaluation. American Journal of Evaluation, 34(1), 7-22. doi: 10.1177/1098214012464037

- Cousins, J. B., Shulha, L. M., Whitmore, E., Gilbert, N., & Al Hudib, H. (2015). Evidence-based principles to guide collaborative approaches to evaluation. CRECS Ten Minute Window, 3(4).

- Cousins, J. B., Shulha, L. M., Whitmore, E., Al Hudib, H., & Gilbert, N. (2016). How do evaluators differentiate successful from less-than-successful experiences with collaborative approaches to evaluation? Evaluation Review, 40(1), 3-28. doi: 10.1177/0193841X16637950

- Shulha, L. M., Whitmore, E., Cousins, J. B., Gilbert, N., & Al Hudib, H. (2016). Introducing evidence-based principles to guide collaborative approaches to evaluation: Results of an empirical process. American Journal of Evaluation, 37(2), 193-215. doi: 10.1177/1098214015615230

- Whitmore, E., Al Hudib, H., Cousins, J. B., Gilbert, N., & Shulha, L. M. (2017). Reflections on the meanings of success in collaborative approaches to evaluation: Results of an empirical study. Canadian Journal of Program Evaluation, 31(3), 328-349. doi: 10.3138/cjpe.335

- Cousins, J. B. (Ed.). (2020). Collaborative approaches to evaluation: Principles in use. Thousand Oaks, CA: SAGE.