by Marina Apgar

While celebrating a greater openness toward participatory evaluation (PE), many evaluators continue to adhere to traditional ways of understanding “rigour”. Within these frameworks, quality standards rest on the supposed existence of a methodological hierarchy, in which objective and quantitative methods are ranked above others that are considered less “rigorous”.

These traditional approaches to rigour show up in evaluation designs that select one central method (which may be quantitative or qualitative) and add other, less important methods if required, creating a mixed methods approach. If we follow this approach, our role as evaluators is to faithfully and strictly apply a protocol based on the standards established by our central methodology. In this context, participatory methods are considered weak, lacking in rigour and prone to bias. The only way to overcome their perceived weakness is to add “objective” methods to increase the “rigour” of the participatory design and so minimise its bias.

This traditional way of looking at rigour does not allow for a true and useful exploration of quality in a PE process. But, fortunately, there are alternatives! Robert Chambers proposed one, which he called “inclusive rigour”, intended to build a more inclusive research practice in conditions of complexity.

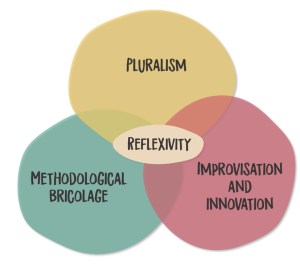

If we adapt the work of Chambers, we can identify four principles that form a radically different vision of rigour in the PE context.

Pluralism. The quality of the evaluation should be based on making the best use of a broad range of knowledges. In this way, the process of change (and the results obtained) can be understood from the multiple, different and sometimes contradictory perspectives of stakeholders, and the most marginalised stakeholders are guaranteed the opportunity to contribute. Pluralism requires a variety of collective analytical methods that help participants build a shared understanding of the results that emerge.

Improvisation and innovation. Fostering a truly participatory process with key stakeholders implies embracing uncertainty in order to be relevant to any particular context in which various versions of reality are expressed. Relevant measures of success are discovered through a process that is open to uncertainty, utilising improvisation and innovation with stakeholders along the way.

Methodological bricolage. When the above two principles are followed, the need arises to combine different methods creatively and to improvise collectively within the context. This means mixing methods to find the best combination, rather than simply adding one to another.

Reflexivity. With this principle, the quality of the process is determined by who we are as evaluators when we implement PE. Our own ways of thinking and working allow (or do not allow) us to understand and manage power dynamics. The capacity for reflexivity is built through experience: it is a praxis.

Inclusive rigour in practice

The CLARISSA programme is implemented by a consortium of partners who are experts on child labour and on youth, adolescent and child participation in Bangladesh and Nepal. It is a systemic action research programme that facilitates participatory action research with children involved in the worst forms of child labour (in the leather industry in Bangladesh and the adult entertainment sector in Nepal). The children analyse their own situations and seek solutions to the systemic dynamics that generate conditions of exploitation. The focus of the programme, the worst forms of child labour, is a complex problem. We do not understand the systemic dynamics that drive child labour, and so we cannot know a priori what the solutions will be. These solutions emerge from the participatory implementation process.

The principles of inclusive rigour are put into practice in CLARISSA through a participatory adaptive approach. The adaptive management of development programmes is not a new concept, but CLARISSA goes beyond common ‘problem driven’ approaches towards ‘people driven’ approaches focused on the participation and inclusion of marginalised people.

When we use this participatory approach, evaluation becomes a central part of the intervention itself: we evaluate from the inside, not from the outside. As evaluators, we become part of the implementation team and foster pluralism as we walk alongside the participants and analyse their experiences and knowledge.

We did not use a logical framework in our monitoring and evaluation system so we could remain open to uncertainty. We agreed on an approach with the donor that allowed us to design the evaluation as we went along, based on a reflexive use of the theory of change. As we work with the key stakeholders and understand the particularities of their realities, we develop more specific theories of change to evaluate how the change we seek is being achieved and respond to our main evaluation question, namely: ‘What contribution are we making to finding solutions to the worst forms of child labour?’

This sometimes requires us to make radical adaptations. For example, we began with the intention of focusing on “supply chains”, and we assumed this meant working with multinational companies and well-known brands. However, we soon discovered that most child labour in the leather industry in Bangladesh takes place in informal, hidden spaces where small family-run businesses employ children. This required a redesign: we built more detailed theories of change and began working with small-business owners. This significant adaptation at the beginning of the programme was only possible because we were not following a logical framework with predefined success measures or indicators.

Methodological bricolage

Methodological bricolage is facilitated by the use of Contribution Analysis, an evaluation approach whose theory permits the use of participatory methods. For example, we carried out a rapid realist review to understand, from a realist perspective, how participatory action and research generate innovation.

Following the literature review, we are now using participatory methods to investigate the process from the inside, with the key stakeholders as participants. Furthermore, to detect and investigate changes emerging beyond the participatory action and research groups (for example, in the production sectors), we will combine Outcome Harvesting with Process Tracing to deepen our understanding of the project’s contribution to broader changes.

Finally, we are intentionally cultivating the principle of reflexivity by facilitating collective and individual moments of reflection and learning along the way. To this end, we facilitate after-action reviews every six months to institutionalise and foster a culture of reflexivity.

To apply inclusive rigour, the evaluation design must be integrated into the development intervention so that we are not tied too quickly to a static results framework. We must also seek to use a combination of methods, based on both theory and experience.

This contribution was presented at the session organized by EvalParticipativa for the Participatory Action Research and Evaluation Conference (PAREC) in April 2022.

I looked back at the dictionary definition of rigour. https://www.merriam-webster.com/dictionary/rigor Pls have a look at it as well! Do we really want to make “rigour” (and its implications) the main driver of evidence generation? Thanks to the authors for reminding us of the importance of other approaches, allowing for insights, quality, plurality…. Approaches that do not straitjacket understanding as only “rigour”. Rigour has a space, of course. But making it the entry point does not allow us to understand where strict rigour is best applied.