Thoughts on rigour in Participatory Evaluation practice and training

by Esteban Tapella and Andrea Meneses

For almost ten years, we have been running participatory evaluation (PE) courses and workshops for a range of sectors. Some training events have been one-off introductory sessions limited to a single webinar, while others have been in-person workshops that have gone into much more detail over three to five days. The increase in interest in postgraduate PE training is a much more recent phenomenon and members of the EvalParticipativa team have taught at various universities in Latin America and Europe.

In these training events, we are repeatedly asked to cover two topics. The first is practical: participants want to hear case studies and examples. The second is methodological: they want us to address issues such as the rigour and credibility of PE. In this article, we share some thoughts on this second aspect because we think it is relevant to postgraduate academic training and therefore worth exploring further.

THE EPISTEMOLOGY OF PARTICIPATORY EVALUATION: A UNIQUE VIEWPOINT

Several intellectual traditions and disciplines have sought to understand the nature and meaning of reality and how we can come to know it. Every evaluation should be explicit about its assumptions, whether ontological (what is happening), epistemological (how we know what is happening) or methodological (how we can access this reality), given that evaluation practice is based on these assumptions. It would be a great starting point if postgraduate PE content and curricula recognised this.

In PE, interpretative and qualitative approaches take precedence. From our perspective, these kinds of approaches share three elements: (a) an interest in understanding the different ways in which the social world can be interpreted, understood, experienced and produced; (b) data generation methods which are flexible and sensitive to the social, political and cultural context; and (c) a desire to understand complexity, a plurality of voices and the reality at hand through the methods of analysis and explanation chosen.

In this sense, PE is above all an interpretative approach, and this has certain implications. These include an immersion in the daily lives of the people affected, an appreciation for the perspectives held by participants regarding their own contexts, practices and subjectivity, and a recognition of the weight of different interactions between social actors during the evaluation process. This process involves both evaluators and participants, and uses their words and actions as primary ‘data’ throughout.

All this leads us to conclude that PE is not about verifying a theory or hypothesis (as is the case in positivist approaches or experimental designs) but rather, we work to discover and collectively construct knowledge from the situations we are evaluating.

In PE, we seek to respond to questions such as ‘how?’ and ‘why?’ and our focus is on understanding the complexity of the social processes, power dynamics and social context of the intervention (plan, programme or project). Thus, our approach goes beyond asking ‘how many?’ or ‘what percentage?’ in order to ask ‘how did it happen?’, ‘why did it happen?’, ‘what is significant according to varied perspectives?’ or ‘what are the implications of…?’.

In PE, the evaluation perspective seeks to identify and explain the ‘contributions’ that an intervention has made to certain changes rather than the changes that can be ‘attributed’ to the intervention. To summarise, we are not looking for a statistical representation that enables us to generalise the results (in terms of quantitative magnitude of the sample). Instead, we focus on its meaningful representation, or the contribution different social actors believe it has made to particular changes they have seen. We thus hope to identify facts, experiences, realities and relevant cases in order to reveal a relevant network of social relationships linked to the intervention.

SO, HOW SHOULD WE APPROACH RIGOUR FROM THIS PERSPECTIVE?

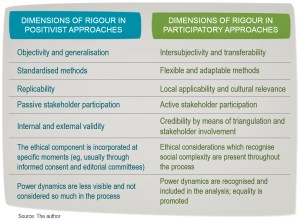

Traditionally, when we talk about rigour we think about certain principles or categories, such as objectivity, standardisation, replicability, generalisation and validity. However, in PE we should not think about rigour in terms of the ontological, epistemological and methodological assumptions that classical evaluations based on experimental designs (the positivist paradigm) often use to guide impact evaluations. As we stated in the previous section, in PE we focus on discovering the meaning that social actors give to the intervention and their relationship to it. We focus more on depth than breadth, and we seek to capture the different nuances highlighted by participants.

In other words, the focus is on understanding and on recognising similarities, differences, tensions and interrelationships in order to contribute new perspectives on contexts and situations. This implies consistent communication and interaction between the facilitator and participants and the reality they inhabit. Therefore, far from seeking some presumed objectivity, we begin by valuing and recognising the intersubjectivities, feelings and observations of the different actors. We aim to capture their values, social norms, cultures, identities and discussions.

This encourages us to pursue designs that are flexible, customised and context-sensitive, all of which differentiates PE from classical impact evaluations. In PE, neither the instruments nor the samples are defined a priori. Rather, they are gradually selected and constructed during the evaluation process. As this advances, we can make adjustments and variations which include the addition of new case studies, the posing of new questions or the modification of existing ones, or the use of different combinations of tools.

At the heart of every PE is the idea that an intervention cannot be infallibly ‘known’, let alone ‘assessed’; we can only aspire to reflect multiple interdependent sources of knowledge. Triangulation is an important concept in PE.

It uses a combination of sources, tools and perspectives that can highlight different aspects of the same situation to establish its precise ‘location’ in any given context. In PE, triangulation helps overcome biases that can come from only appreciating one way of knowing and seeing, valuing, feeling and perceiving an intervention. It is not just that different approaches are used, but rather that they are also used in an integrated manner.

We can identify at least four forms of triangulation, namely of viewpoints, sources, tools and theories:

- The triangulation of viewpoints is perhaps the most obvious form used in PE as it examines a situation from the multiple viewpoints of the different observers (the different social actors that we involve in the evaluation process). Taking into account multiple viewpoints contributes to the reliability of the findings as it ensures that different social actors participate and discuss the same object (the intervention and its nuances).

- The triangulation of sources refers to the different ways that information can be combined. This may refer to the information that comes from different people within the same context, or it may explore a phenomenon that affects different spaces and thus combine viewpoints from different geographical areas. In addition, it can refer to the combination of information gathered at different moments of the intervention under evaluation.

- The triangulation of tools is perhaps the most widely used in PE. The basic assumption behind this type of triangulation is that the weaknesses of one particular tool (e.g. an interview or questionnaire) can be offset by the strengths of another (focus group, community workshop, simulation game etc.).

- Lastly, while it may not be as visible in PE, the triangulation of theories or paradigms refers to the use of different conceptual frameworks to understand a phenomenon or account for an object under study.

The triangulation of viewpoints, despite sounding simple, is in practice fairly complex. This is especially true if we want our evaluations to be rigorous. From our experience, the most commonly encountered difficulties relate to the identification of the different social actors and their representativeness. In other words, who will we involve and how? When we look at PE in practice, we do not always find a good key stakeholder map (KSM).

There are three fundamental reasons why a good KSM is critical: (a) the normative argument insists that transparency, democratisation and human rights should be integral to evaluations and decision-making processes that might affect the intervention and/or the people associated with it; (b) the substantive argument refers to the participation of social actors as a way of improving our understanding of complex situations involving multiple perspectives on specific topics; (c) the instrumental argument refers to the opportunity to create spaces for dialogue for social actors who may hold opposing viewpoints as a way not only of identifying differences but also resolving potential conflicts of interest and tensions.

At times, the identification of stakeholders is ambiguous and involves duplications. In other words, only those stakeholders with similar opinions are heard while those with opposing strategies and interests are sidelined. It can also be difficult to select the stakeholders that are relevant to the intervention. At times they have overlapping roles, or it is simply difficult to identify clearly what they do in relation to the programme under evaluation or the topic of interest. Other difficulties and risks emerge when working with multiple stakeholders linked to an intervention. These include changes in perspective among stakeholders over time; the absence of stakeholders who were already under-represented or not well understood; tensions, conflicts and power dynamics; the use of overly simplistic and rigid classification systems; and the prioritisation of stakeholders who are ‘easy’ to work with over others who are more ‘elusive’ (a practice that is frequently observed).

Throughout any rigorous participatory evaluation, we should also consider ethical issues and inclusion when analysing the power dynamics that underlie every social intervention. For example, the gender perspective should be refined at each stage of the evaluation, any situations of discrimination, exclusion or promotion should be identified and analysed, and practice should reflect equality and inclusion.

When we think about rigour from the participatory perspective, it is likely to include many principles and dimensions. We think it is particularly important to highlight the following seven aspects that we have alluded to in previous paragraphs.

FINAL CONSIDERATIONS

Many PE training programmes have followed the approach proposed in our handbook Sowing & Harvesting. Chapter 2 discusses the origins of PE and its different currents, the ‘what’ or conceptualisation of the approach, and the role of civil society participation. Chapter 3 focuses on the process, the ‘how’ or evaluation methods that involve civil society participation. Chapter 4 is intended to describe the role of evaluators who facilitate these evaluation processes. The final chapter describes and classifies tools and provides some recommendations on how to select, create and use participatory tools. This provides a valuable introduction to this type of evaluation, but we cannot stop there.

Therefore, training sessions should be more focused on equipping people to carry out this type of approach in a rigorous manner. There are some topics that we often wrongly assume are already understood. A few examples of these topics include: feminist, gender and human rights approaches; ethics in evaluation practice; evaluation standards; conflict resolution; qualitative social research methodologies; and qualitative data analysis strategies. Furthermore, there is a clear need to develop skills in cultural sensitivity, the facilitation of processes, critical thinking and non-violent communication. These topics may not be new to people who have studied social sciences but this is not the case for many other people who are participating in a PE for the first time and whose prior training did not cover these areas.

In keeping with these ideas, we believe it is important to consider how we can certify and include these skills in postgraduate training in evaluation. This will help ensure evaluations that are more rigorous, methodologically solid and inclusive. After these training sessions, participants should be able to define what they are going to evaluate, how they are going to approach the process and who they are going to involve. They should also be able to state how they are going to involve multiple stakeholders, access and interpret data, recognise the political and cultural context which is being evaluated, and take into account relevant ethical considerations and principles of equality at each stage. At the end of the evaluation, their findings and conclusions should represent in a meaningful and rigorous way the diverse opinions and perceptions held by different stakeholders concerning the situation being evaluated.