by Andrea Meneses

In our quest to keep moving forward, we look back to examine evaluation processes that we have accompanied or implemented. As we do so, it is common to ask ourselves about the impact of the decisions taken, the results produced and the recommendations made. It is normal to wonder if the experience has been useful. It can be even more challenging to respond to these questions when an evaluation has been carried out using a participatory approach, given that a great deal of effort in these cases is focused on capacity building and learning for all stakeholders.

We found ourselves in this exact situation in 2017 at the end of a long participatory evaluation that we had accompanied in the Limón province of Costa Rica. The evaluation had brought together a number of different voices and opinions on the Cancer Prevention and Care Services run by the Costa Rican Social Security Fund. You can find out more about this experience by clicking on the following links: video 1 and video 2. You can also access the documented evaluation from this link.

After two years of intense work, we wanted to bring together the different stakeholders involved in the evaluation to reflect on the process: lessons learned, the difficulties and challenges faced and the uses the findings might be put to. To do so, we designed a participatory tool in the form of a game called the Participatory Evaluation Maze that encourages those involved to look back on the experience with a critical eye. You can access the whole tool from this link.

This type of tool, known as a game to encourage thinking, provides entertaining, horizontal spaces that ‘create conditions that encourage communication and the expression of feelings, experiences, information, ideas and expectations, as well as learning and the acquisition of new knowledge about different topics and situations’ (Tapella et al., 2022). Other similar tools were used during the evaluation, such as the ‘Myths and Beliefs about Cancer’ game. If you want to know more about these thought-provoking games, we recommend you read this post.

What is the Participatory Evaluation Maze?

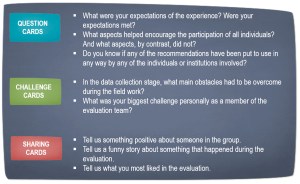

The questions on the game cards are based on the eight guiding principles of collaborative approaches to evaluation developed by Cousins et al. (2015). Such approaches seek to conduct evaluations in partnership with the people targeted by the interventions, placing their interests and information needs, as well as the context, at the centre of the whole process.

These principles are: (1) explain the motivation to collaborate, (2) foster meaningful relationships, (3) develop a shared understanding of the intervention, (4) promote appropriate participatory processes, (5) monitor and react appropriately to resource availability, (6) monitor the development and quality of the evaluation, (7) foster evaluative thinking, and (8) provide follow-up to analyse usefulness.

The Participatory Evaluation Maze adapts the indicators of each principle to form questions that enable evaluation, reflection and dialogue on the developments, difficulties and lessons learned during the participatory evaluation process. It also considers any challenges that arose.

The following table provides examples of cards that feature in the Participatory Evaluation Maze:

The Participatory Evaluation Maze experience

In our evaluation of the participatory evaluation of the Cancer Prevention and Care Services in Valle de la Estrella, the game sparked conversations and highlighted the varied perspectives of the different members of the evaluation team concerning which aspects had worked well and which could be improved in similar processes in the future. Six representatives from the health boards (local organisations responsible for overseeing the administration of the Costa Rican Social Security health services) who had been part of the evaluation team also took part in the game. The session lasted two and a half hours. An external facilitator was on hand. The facilitator did not take an active role in the game but ensured that the activity ran smoothly, recorded the different perspectives expressed, encouraged consensus in discussions and highlighted areas of divergence.

One of the most striking findings is that the players reported a better understanding of the intervention following the evaluation. This in turn made them feel more confident about making demands or expressing their needs to the Costa Rican Social Security Fund, the implementing agency. Another key point is that they felt they had gained a greater understanding of cancer issues, which translated into an increased capacity to advocate and engage with local health staff. They also highlighted some areas of the fieldwork phase that could be improved, such as the need to involve key institutional and community actors who had not been consulted, and the importance of more active participation by the health boards in evaluating the results. As for the use of the evaluation findings, the players noted that the authorities had shown little response to the evaluation report, which they linked to the limited advocacy by the stakeholders involved in disseminating the recommendations.

The findings generated by the game also provided an excellent starting point for rethinking the strategy for following up the recommendations, which was developed later, in 2018. Specific actions were taken to analyse the progress of the recommendations, including workshops involving political authorities and decision-makers, the health boards, other local actors and health personnel.

Thus, not only did the Participatory Evaluation Maze enable a critical reflection on the process that had taken place, aspects that could be improved and the lessons that could be taken into future experiences, it also served to propel the implementation stage.

Here is a video describing the tool and its use in participatory processes.

References

Sanz, J. C., Tapella, A. Meneses (2019) Participatory Evaluation of Cancer Prevention and Care Services. A Case Study from Valle de la Estrella, Costa Rica, in Cousins, J. Bradley (ed), Collaborative Approaches to Evaluation: Principles in Use, Chapter II, pp. 47-53. SAGE Publications, Thousand Oaks, CA.

Tapella, E., P. Rodriguez Bilella, J. C. Sanz, J. Chavez Tafur, J. Espinosa Fajardo (2022) Sowing & Harvesting. Participatory Evaluation Handbook. DEval, Bonn, Germany.